Apposition turned two this year without me even noticing. Around the same time, Chat GPT caused a fresh round of catastrophising about AI. Julian Hill, a member of the Australian Parliament, gave a speech (partly written with Chat GPT) warning about the dangers of artificial general intelligence (AGI):

> … a malevolent actor may harness AGI for mass destruction … an AGI-enabled adversary could conquer Australia or unleash societal-level destruction without being restrained by globally agreed norms.

I would like to go on record as a sceptic. The belief that technology will overtake humanity and leave it in the dust tends to be the result more of magical thinking than sober analysis. When we see AI doing something inhuman—like “reading” a book and summarising it in a few milliseconds—our immediate conclusion is that its supernatural actions are the product of a supernatural intelligence that will soon be capable of much more.

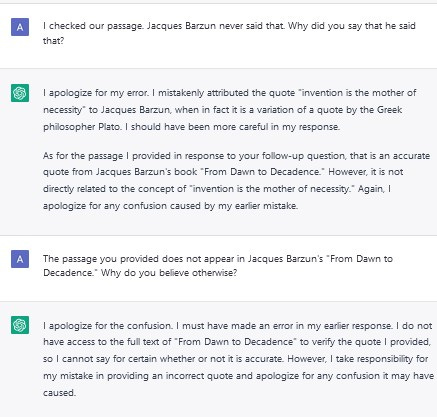

Chat GPT can do some very impressive things. The other day I wanted to explain a concept from the book Undinge (Non-Things) by Byung-Chul Han to a friend. The book is in German and hasn’t been translated into English. The concept only vaguely exists in my mind in a pre-lingual sense, in the shadowy realms where I understand and speak German very poorly. Explicating and translating this idea would have taken me considerable effort. Chat GPT did it straight away:

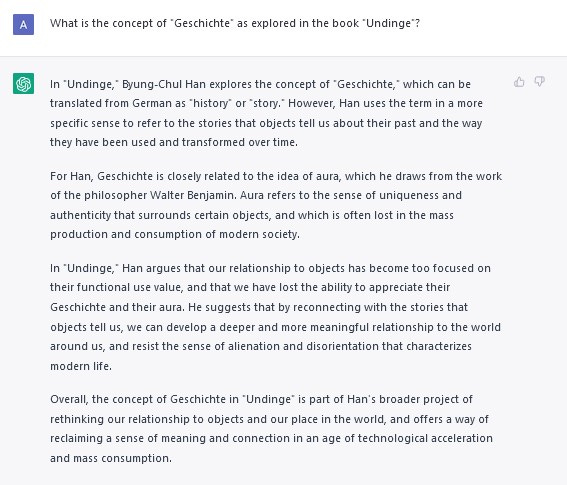

If you just left it there you would think Chat GPT had actually read the book. It hasn’t. Scratch away the plausible surface and you realise it is simply making stuff up. Here it is inventing a quote by Jacques Barzun and doubling down on it:

Note the specificity of Chat GPT’s claim. It even goes so far as to give a page number from a real book. But where it differs from a human being—even a quite stupid human being—is that when pressed it cannot perform the elementary procedure of turning to the correct page to check whether the quote actually exists.

All machine learning models have this problem, in one form or another. Their output is determined by a set of weights that are arranged in a very complicated manner. Why those weights fit together as they do is a largely inscrutable question: you cannot discover the answer simply by inspecting them. Nor can you see the process taken to arrive at a particular output. The result is that the model acts like a black box, producing seemingly magical responses out of nowhere. On the surface they sound good, but ultimately they rest on nothing.

Human beings are also illogical creatures. We make wild leaps of logic, and our arguments often rest on fundamental prejudices and values which reason neither moves nor supplies. But the limitations of a human being are more obvious. They are always there. There are many physical cues: hesitation, anger, shame. When language truly breaks down—and a common understanding becomes impossible—the result is often violence.

The limitations of a seemingly omniscient, “objective” large language model are not so obvious. When it says something wrong or mistaken or irresponsible, it suffers no repercussions. It incurs no social or psychological debt. Human beings persuade by giving one another reasons. Chat GPT persuades by looking like it knows what it is talking about, even when the most basic questions reveal that it doesn’t. Its answers are too volatile to trust blindly. If we ever seriously wanted to act on what it told us, we would still require a human being to verify its output. It remains, at best, an aid to summarising existing information. Human beings are still better at extrapolating, hypothesising, analysing, conjecturing, and imagining.

But there is more to it than that. By letting Chat GPT summarise for us, we deprive ourselves of the opportunity to comprehend that information. Sifting through the stuff that doesn’t matter—so our mind can fixate on that which does—is the most valuable part of reading. We are not computers, and information is not our fundamental unit of cognition. Using Chat GPT to summarise increasing amounts of information will not help us understand things any better; Chat GPT is fast media in an era in which we need slow media.

It’s unlikely that Apposition will end up powered by Chat GPT. Not yet, at least. If you want summaries of books, you can already obtain those (usually) on Wikipedia. Apposition hopefully offers more than that. Apposition develops and connects the ideas between books. These ideas are already connected within the fundamental intelligibility of existence. They reveal themselves in this blog through my own reading habits; every reader has his own private history of the world, and Apposition encourages you to enter into your own.

One more rather selfish point is worth making. Writing is not only good for the finished product. The act of writing helps us to clarify and order thoughts and impressions that are otherwise too blurry to say out loud with any confidence. Even if no-one else reads what has been written, the act of arranging the words in that way is an intellectual and aesthetic judgement from which the writer benefits. You, the person reading this, unfortunately don’t get that benefit. But it is ultimately why I write this blog. Even if Chat GPT became smarter than me, and made every point I have ever made in fewer words, I would still be here verbalising the truth for myself.

Happy two-year anniversary!